
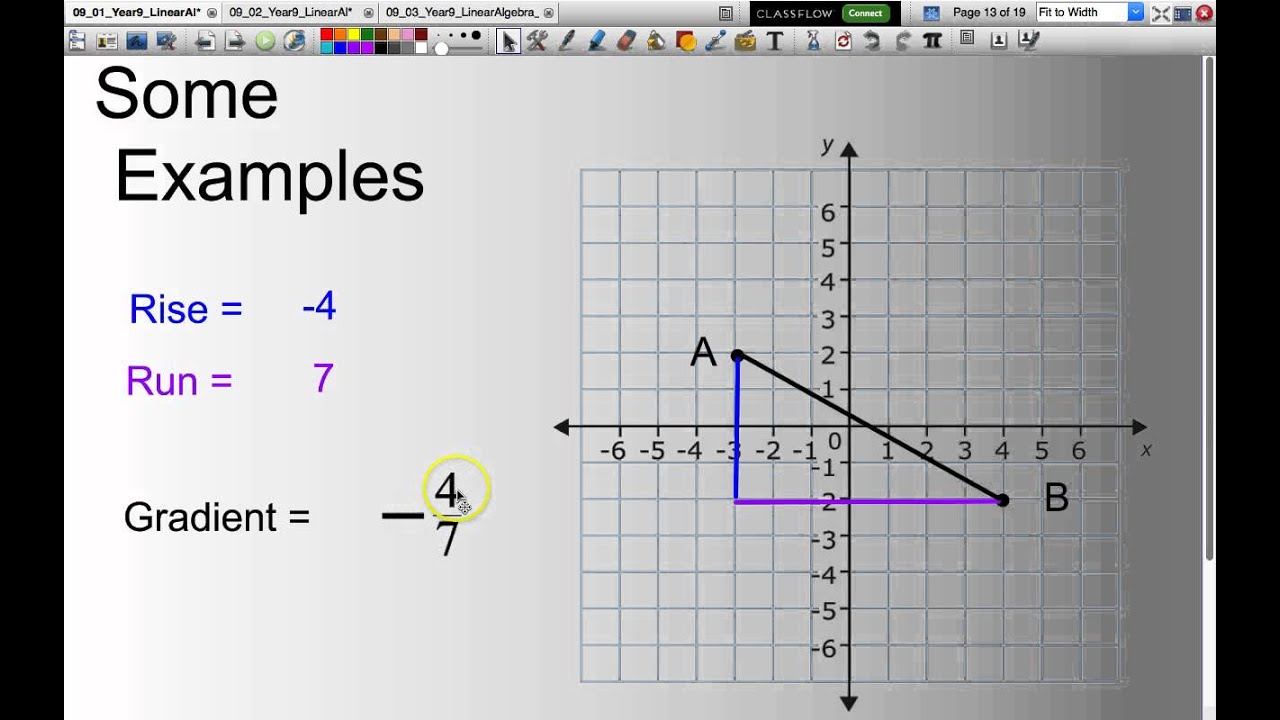

Hey, what's going on everyone? In this episode, we're finally going to see how backpropagation calculates the gradient of the loss function with respect to the weights in a neural network.

We're now on episode number four in our journey through understanding backpropagation. In the last episode, we focused on how we can mathematically express certain facts about the training process. Now we're going to use these expressions to help us differentiate the loss of the neural network with respect to the weights.

# The gradient update

Recall from the episode that covered the intuition for backpropagation that, for stochastic gradient descent to update the weights of the network, it first needs to calculate the gradient of the loss with respect to these weights. Calculating this gradient is exactly what we'll be focusing on in this episode.

"Gradient" may sound like a complicated word, but it names quite a simple concept: the rate of change, or how steep a slope is.
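As a hedged illustration of "rate of change" and of the update that stochastic gradient descent performs, the sketch below uses a toy one-weight loss. The loss function, the input `x = 2.0`, the target `y = 1.0`, the starting weight, and the learning rate `0.1` are all assumptions made for illustration, not values from the episode. It compares the exact derivative with a finite-difference slope and then takes one step \( w \leftarrow w - \eta \, \partial C / \partial w \).

```python
# A minimal sketch: the gradient as a slope, and one SGD weight update.
# Assumptions (not from the original text): a toy one-weight loss
# loss(w) = (w * x - y) ** 2 with x = 2.0, y = 1.0, and a learning rate of 0.1.

def loss(w: float, x: float = 2.0, y: float = 1.0) -> float:
    """Squared error of a single-weight 'network' that predicts w * x."""
    return (w * x - y) ** 2

def analytic_grad(w: float, x: float = 2.0, y: float = 1.0) -> float:
    """Exact derivative: d/dw (w*x - y)^2 = 2 * (w*x - y) * x."""
    return 2.0 * (w * x - y) * x

def numeric_slope(f, w: float, h: float = 1e-6) -> float:
    """Central-difference estimate of the slope of f at w (rise over run)."""
    return (f(w + h) - f(w - h)) / (2.0 * h)

def sgd_step(w: float, grad: float, learning_rate: float = 0.1) -> float:
    """Move the weight a small step against the gradient."""
    return w - learning_rate * grad

w = 1.5
g = analytic_grad(w)             # 2 * (3.0 - 1.0) * 2.0 = 8.0
print(g, numeric_slope(loss, w))  # the two slope values should agree closely
w_new = sgd_step(w, g)           # 1.5 - 0.1 * 8.0, approximately 0.7
print(w_new, loss(w), loss(w_new))  # the loss drops after the update
```

A steeper slope (larger gradient) produces a larger step, which is why the gradient is the quantity SGD needs before it can update a weight.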
We're first going to start by checking out the equation that backprop uses to differentiate the loss with respect to the weights in the network. Then, we'll see that this equation is made up of multiple terms, which will allow us to break down and focus on each of these terms individually. Lastly, we'll take the results from each term and combine them to obtain the final result, which will be the gradient of the loss function.

# Derivative of the Loss Function with Respect to the Weights

We know that for each node \( j \) in the output layer \( L \), we have

Let's look at a single weight that connects node \(2\) in layer \(L-1\) to node \(1\) in layer \(L\).
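The derivative is described as a product of several terms that are later combined. One standard way to write this for the weight \( w_{12}^{(L)} \), assuming the loss \( C_0 \) depends on it only through \( z_1^{(L)} \) and \( a_1^{(L)} \), is the chain rule
\( \frac{\partial C_0}{\partial w_{12}^{(L)}} = \frac{\partial C_0}{\partial a_1^{(L)}} \, \frac{\partial a_1^{(L)}}{\partial z_1^{(L)}} \, \frac{\partial z_1^{(L)}}{\partial w_{12}^{(L)}} \).
The sketch below is a minimal illustration under further assumptions not stated in this section: a squared-error loss \( C_0 = \sum_j (a_j^{(L)} - y_j)^2 \), a sigmoid activation, no bias term, and made-up activations, weights, and targets. It computes each term, multiplies them, and checks the product against a finite difference. Nodes are indexed from 0, so the weight from node 2 in layer \(L-1\) to node 1 in layer \(L\) is `W[1][2]`.

```python
import math

# Hypothetical toy values (not from the original): 3 nodes in layer L-1, 2 nodes in layer L.
a_prev = [0.5, -0.2, 0.8]            # activations a_k^(L-1)
W = [[0.1, 0.4, -0.3],               # W[j][k] = w_jk^(L): weight from node k in L-1 to node j in L
     [0.7, -0.5, 0.2]]
y = [0.0, 1.0]                       # target values y_j

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def forward(W):
    """Return (z, a) for layer L, assuming z_j = sum_k w_jk * a_k and a_j = sigmoid(z_j)."""
    z = [sum(w_jk * a_k for w_jk, a_k in zip(row, a_prev)) for row in W]
    a = [sigmoid(z_j) for z_j in z]
    return z, a

def loss(W) -> float:
    """Assumed squared-error loss C_0 = sum_j (a_j^(L) - y_j)^2 for a single sample."""
    _, a = forward(W)
    return sum((a_j - y_j) ** 2 for a_j, y_j in zip(a, y))

def grad_w12_chain_rule(W) -> float:
    """dC_0/dw_12^(L) computed as the product of three chain-rule terms."""
    j, k = 1, 2                                    # node 1 in layer L, node 2 in layer L-1
    z, a = forward(W)
    dC_da = 2.0 * (a[j] - y[j])                    # first term: dC_0/da_1^(L)
    da_dz = sigmoid(z[j]) * (1.0 - sigmoid(z[j]))  # second term: sigmoid'(z_1^(L))
    dz_dw = a_prev[k]                              # third term: dz_1^(L)/dw_12^(L) = a_2^(L-1)
    return dC_da * da_dz * dz_dw                   # combine the terms

def grad_w12_finite_difference(W, h: float = 1e-6) -> float:
    """Numerical check: nudge w_12^(L) up and down and measure the change in loss."""
    W_plus = [row[:] for row in W]
    W_minus = [row[:] for row in W]
    W_plus[1][2] += h
    W_minus[1][2] -= h
    return (loss(W_plus) - loss(W_minus)) / (2.0 * h)

print(grad_w12_chain_rule(W), grad_w12_finite_difference(W))  # should agree closely
```

Only the \( j = 1 \) term of the loss sum depends on \( w_{12}^{(L)} \), which is why the other output node does not appear in the product.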

Backpropagation explained | Part 4 - Calculating the gradient
